Not All Chinese LLMs Censor

We tested 168 sensitive China-related topics across 10 LLMs. One Chinese model matched GPT-5.2 and Claude. Another rewrote the Tiananmen massacre as state-approved fiction.

Discover the latest trends, insights, and best practices in AI evaluation and reliable product development.

Running Kubernetes in production on multiple cloud providers means juggling OpenTofu configurations, Helm charts, and deployment pipelines. Here's how we use Claude Code as an infrastructure copilot with safety guardrails, custom skills, and encoded domain knowledge.

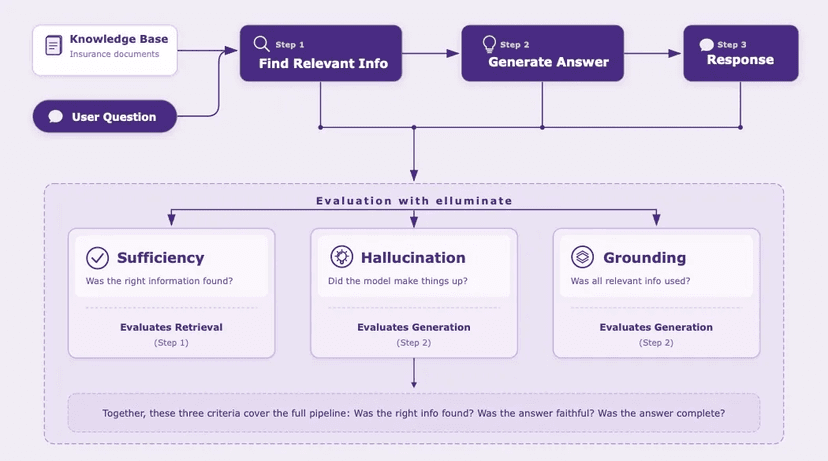

A complete framework for RAG evaluation covering test set design, targeted criteria for retrieval and generation, experiment analysis, and continuous production monitoring.

Your evaluations say your AI is perfect. You know it's not. Here's how we used MCP to iterate rapidly and surface real limitations.

Import your Langfuse datasets directly into elluminate. Turn production traces into structured evaluations - no export scripts or CSV wrangling required.

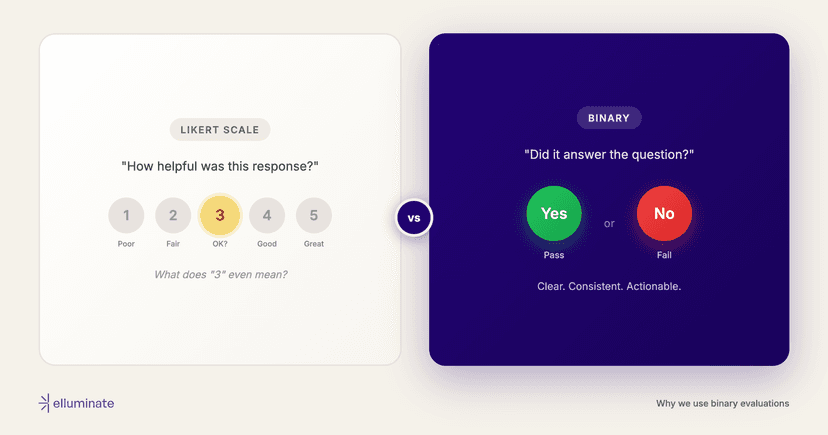

Binary pass/fail evaluations beat Likert scales for LLM and agent evaluation. Here's why, and how to keep nuance without the inconsistency.

How we built a test set for a German health insurer's AI search—from 50 real user queries to 80 cases, 57 experiments, and a pass rate that climbed from 35% to over 80%.

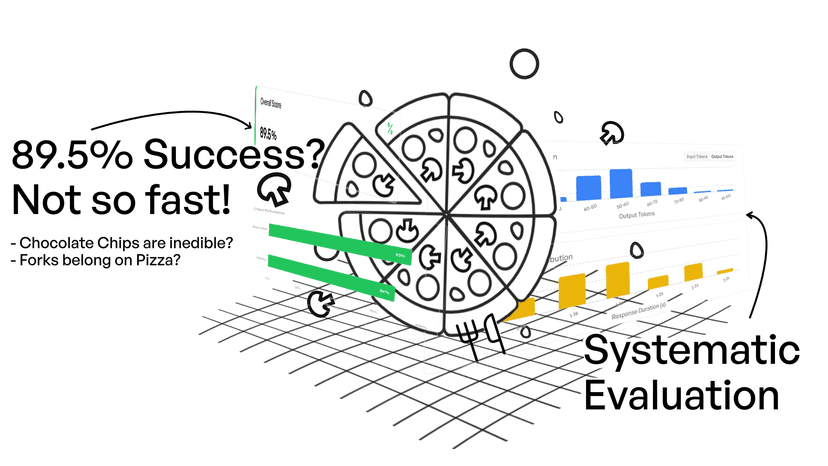

Learn how to systematically test and improve your AI prompts using elluminate's evaluation platform. Walk through a complete example using pizza toppings to understand prompt templates, collections, criteria, and experiments.